Chips wrote: Not really. Your idea of discussion has no similarity to an actual discussion...

So, you're saying that I'm different? Unique? I'm special? I'm a special person?! YAY! Thanks!

Yes, it's a scattered conversation with bits of discussion in it. But, it's a complex subject, just like most "human" subjects. This is ultimately about predicting behavior and performance of humans in terms of learning and application of learned skills. "Learning" itself is... weird. It's so weird that we have all sorts of different orders and levels we've come up with labels for. Do animals learn? Some obviously do. Others, not so much. We can reliably predict capacity in some cases, but we still get surprised.

On forums, with lots of people adding their thoughts, even if it's only one-liners, there can come a nugget of insight that is truly extraordinary. You might make a particularly intuitive interpretation that changes the tides... or not. You don't have to participate, but a voice lost is still a voice lost, with no opportunity to provide unique perspective or facilitate change.

kohlrak wrote: I think it's an ineffective test. Some humans fail to show this capacity, also. Do we analyze them closely enough to know if it's empathy and not mere pity based on our sounds and expressions? Most people find it difficult to understand the simple behaviors of cats, such as "gifts" actually being hardcore insults.

"Pity" has its foundations in "empathy." "Fairness tests" are deemed to be accurate predictors of empathic ability since, in human terms, they must include the ability of the animal to interpret an internal state of another. Otherwise, the other subject is just an object and it's also assumed that there are no complex ritualized behavior or social standards being applied.

For instance, in a natural setting, a baboon that chooses not to take a juicy bit of fruit in deference to another baboon may not be acting in fairness, but is instead obeying the conventions of social hierarchy in the troop, deferring to a senior member. If they didn't do that, they'd be punished if they were discovered "stealing" this morsel. Is that fairness or an empathically motivated response? No, it's perhaps something of a much higher and more complex order.

Some tests are a bit more difficult to extrapolate to human behaviors and empathy. Contagious yawning, for instance, is often cited as a measure of empathy. But... it's never actually been proven that such observations are any indicator of empathy at all. IOW - Testing the true nature of what goes on inside a particular consciousness, if such a thing exists, is haaard. We have to avoid the pitfalls of our own "empathy" in humanizing animal behaviors.

Most certainly. So, we're on the same page. That said, it still needs to be said, simply because the disagreement seems to be the axiom. The axiom needs to be challenged and put in its place. There are too many exceptions in too many things that show that the thing that we "can't put our finger on" is a bigger deal than we give it credit for. As someone who's "slipped through the cracks," I'm especially annoyed with it.

We still don't know enough about "intelligence" to make very dependable predictions in regards to capacity or performance. We can say, however, that we can make "fairly reliable" predictions. Even if these are not completely reliable, they're valuable. We can predict, for instance, someone may need special instruction and we could develop a program that, if proven successful, could be of great help for such people.

(Note: We can make much more accurate predictions on capability when we're faced with issues relating to functional, physical damage to certain regions of the brain whose primary functions we have good evidence for. "Lesion Studies" are really wonderful for exploring such things. For obvious reasons, they are entirely anecdotal in origin, but yield observations that are particularly predictive. (There are very few examples of ethical experimental lesion studies.) Very cool stuffs.)

IMO, there is a matter here of "what you invest in." Women tend to be "more socially competent," in general (the exceptions suggest it is probably more environmental than genetic, but whatever, can't go against the grain). Clearly they're more invested in the social aspect.

Are women more socially competent or are there cultures in which women assume roles that make them more socially competent?

As a young man, I once thought I had "women" figured out. Yup, I felt I had observed all categories of the female species and had managed to develop a set of rules that had predictive value. Of course, that was when I was young and age and true experience hadn't taught me that women are actually an entirely different subspecies of humans, with their own unique behaviors that are practically unfathomable by males. This is, of course, by design, since it's women that rule the Earth and not men. If there were no women, our lives would be solitary, nasty, brutish and short, all of us still in the trees, jealously guarding our branches and throwing poo at each other....

Well, to narrow it down and be more blunt about what I'm trying to suggest: since both white and black cultures are cohabitable (to some degree) in the US, the lower average IQ of blacks in the US seems to suggest to me that the low IQ blacks raised by white people "felt the need" to "feel black," thus adopting the culture, which is easy to do given the accessibility of the culture within the US. If I'm right about culture being the cause, this is worth considering, no? How many white people raised in "black communities" adopt the "black culture" and ultimately end up the exact same way? I think we missed an important control in the experiment. To be fair, it's hard to make a control for. That said, the unpopularity of the topic makes it harder to get more studies done without the control which would help expose the need for said control. This is one of those cases of "if you don't like the results of the studies or experiments, you should let them continue, since they'll end up exposing themselves." This was the basis for the Catholic Church being highly supportive of the scientific method: either they're in agreement, we interpreted something wrong in the scriptures (thus science helps us stay closer to our deity), or we did something wrong in the experiment (thus we need to do it again).

It should be said that there are differences in "sub-culture" not "culture." At least, in what I think you're referring to. Even so, even if we acknowledge that sub-cultures exist, a properly constructed IQ test will not bias against sub-cultures. Note that I consistently qualify my I.Q. test statements with "properly constructed." (An interesting article)

Any test or measurement must do what it is supposed to do or it's invalid, at least partially. What we have to consider is that an I.Q. test can be, at best, a partially valid test... It's not a measure of exact degrees. Instead, it's either cold, hot or lukewarm, always, in terms of predictive value. It has quantitative results that are largely qualitative in predictive value when applied outside the test, itself.

A few things I'd consider fairly accurate:

Cultural differences could have an impact on the results, but aren't destined to always have an impact.

Language skills are generally considered to be a good general indicator of human intelligence. Languages are "cultural" in origin, but often share some similarities. (The verdict is still out on the concept of "Universal Language.")

Genetics does appear to play a strong role.

Socioeconomic status is not a good predictor of intelligence in regards to specific individuals and their performance.

"Race" has a specific definition based in genetics. However, "Race" can sometimes have culturally attributed definitions that are wildly inaccurate.

(Side-note: I think the impact of sexual selection in terms of human evolution has been overlooked until relatively recently. It is very clear that this has played a strong role in human development and that it still represents a strong influence. It's possible that could be applied in discussions regarding socioeconomic, race, subcultural differences, given that genetics still represents a strong indicator of performance in terms of "intelligence." Sexual selection appears to be strongly influenced by culture and social factors.)

...On the other hand, I'd suggest culture affects attitudes, and attitude towards an IQ test really can have heavy effects on the results, just like the presence of female body parts that are more interesting than the test.

An observation - "Attitudes" regarding I.Q. tests can undoubtedly have an effect. The proof for this is easy to see in the acknowledged effects of "test anxiety" (performance anxiety) which is a very real thing. So, in effect, we can certainly say that a subject's perspective regarding the test or the experience of taking it, itself, is a factor. Whether that applies to cultural bias against such tests impacting scores would have to be examined. (I haven't delved deep into these aspects in a long time, so don't know much about any recent discoveries. At the time I did, this sort of thing was acknowledged to have an impact on performance.)

That alone would be far more reliable, yet English education today discourages that. Students often get marked down for things such as "I feel that" or "I think that," since everything you say must be a fact, even if it's opinion. This is causing a lot of political trouble. That said, people have a hard time judging success when they see it. Who's more successful, a man with a happy wife and kids who doesn't have enough money to buy a new car, or Robin Williams before he committed suicide?

Measuring "self-actualization and quality of life" in terms of some sort of quantitative scale will always be futile... We often attempt to measure it in terms of socioeconomic status and professional performance, but those are often extremely poor predictors. There are some general indicators that appear to show economic status as having some predictive value. BUT, as that can be influenced by a great many things, it's not something that can be applied across a wide spectrum. We can't say rich people are always happy and poor people are not nor can we say that people consider themselves "successful" (Self-actualization, in general) based upon their socioeconomic status. We can say "money" does make people happier, the more of it that they have. But, money does not equate to happiness.

Is it the test that is biased against culture and/or race, or is it race and/or culture that is biased against the test (or testing in general)? The latter cannot be solved, which is my very prediction for the results that we see.

It's more about how we define intelligence than anything else. IOW - It's about "Are we truly measuring what we intend to measure." It's always about that.

If we take a properly constructed human I.Q. test developed in the United States and give it to a Sudanese tribesman, he should be able to reliably complete it and his score should have some predictive value. Should. Then again, it's more likely he's going to say "wtf is this $!47" and hand it back to us... It's likely that's a true "cultural" difference that would have to be accounted for. If we give the same test to anyone in the U.S., would their "sub-cultural" differences come into as strong a play? Maybe, maybe not. How extreme are those sub-cultural differences and were they accounted for?

... To be honest, I don't think there's anything wrong with the test, other than that rather than seeing that something needs fixed in education, it's becoming a method to decide which students should and should not be invested in. I can demonstrate empirically that we should look to the former rather than the latter.

I can teach any human, provided they are not severely handicapped in a way that precludes it, to do anything that is within the spectrum of human capability.

All I need is the time to do it coupled with enough voltage...

But, we desire to avoid building giant Skinner boxes and printing "School" on them, right? /sigh Oh well, if we're going to have those sorts of constraints, I might as well not even try.

So, instead, what do we do? We decide to forego voltage and food pellets and, instead, we use "grades" and "expulsion!" It's brilliant!

In truth, it's not a bad system. It works. It has been proven to have value. But, what we say we want is to produce students that are not only capable of earning food-pellets, but are capable of devising ways to escape the box by creating new and innovative things. How much of that can be taught by using our same-ol' box?

If we want to teach innovation and creativity, then we actually have to introduce those things to students in ways that allow them to be innovative and creative. We've been chasing "innovation and creativity" for eons. Why? They're extremely valuable things, of course.

But, innovation and creativity in individuals, as we define such things, seems to appear only a few times, here and there, and often not within the same person. An individual, it seems, can only hope to have one truly innovative and creative moment in their lives. The rest of their life will be spent trying to reproduce it, often without success.

For an individual, teaching them so they can attain that one, singular, moment isn't likely to yield consistent rewards over their life-time. For a society or culture, however, it could have very strong, repeated, positive impacts and that's why we, as cultures that value such things, pursue it.

In short, if there is such a thing, what do we want to accomplish and what do we value in our cultures?

A culture that values independence, freedom, the rights of the individual, and respects individual achievement will, most likely, value teaching innovation, creativity and individual perseverance.

BUT, at least in the US, we have a long tradition of using public schools to turn out the exact opposite of this. We have often relied, entirely, on private institutions and advanced education (colleges and universities) to supply these exceptional... exceptions. Getting a generic student from a public system interested in conformity to a low standard to an institution that prizes individual excellence... has been problematic. Historically, membership in the intellectual elite, or the opportunity to improve one's intellect, has almost always come to those with the economic capability to take advantage of such things. That is changing in the modern day, but it still lags behind where we have determined we want it to be.

...It seemed more like some people just aren't as interested as they thought...

And, the ranks of hopeful candidates swell for those desiring to become astronauts. Until they realize how difficult it is.

Counseling, always. The college Freshman is typically a young person, with a young-person's understanding, and a young-person's desires. They may not know what they "want." And, if they do, how many actually stay in the same degree programs through to graduation? And... why? Do they want to be there or do they feel they just have no place else to go?

For those who truly want it, inspiration is still possible and they can discover new motivations that are positive and enticing. For those who are hesitant or just don't seem to have enthusiasm for the subject, they could find themselves much more rewarded by another discipline or even an associated discipline outside their current field of study.

It's up to the teacher to be a good counselor and advisor to their students as "students," not just as "prospective practitioners of xx discipline." Counseling and help can't just be limited to a once-a-semester visit with their faculty advisor or department chair. They're usually young persons, just at the latter stages of adolescence, and the adults in the room still have to be good stewards, just like they were when gathered around the campfires in ancient caves. It's their responsibility to offer guidance.

There's the rub: I don't think proper instruction is the end goal. I think "saturation" and "covering my rear" are the goal, and they're incompatible, oftentimes, so I'd say they're mutually exclusive as well. So, the ones who focus on saturation tend to "dumb down" their material or "create paths to success" ... "Covering my rear" tends to mean that we're willing to change our material or the way we present it, in such a way that the proper education is next to impossible.... The more you attempt to "one size fits all," the more you end up with gifted students being average, and what should be average probably still doesn't even learn. Teach properly to the gifted ones, give extra help to the problem students.

While this is true, institutions must still have a means to judge the effectiveness of the instruction they provide. And, it's most desirable to do that before any errors could cause too much damage. So... you've got quarterly reports, reviews, lesson-plans, yada yada yada.. How do we judge the efficacy of a program of instruction that isn't standardized or that proposes dynamics that are difficult to track?

Yes, you're completely right, a teacher must tailor their instruction for the individual student where necessary. This is why true teaching is hard work.

"Fake Teaching" is showing up every day to talk and then to give some tests to some people that keep showing up to listen to you talk...

The problem of individualized instruction has historically been met with reductions in classroom size and decreased workload. In general, this is effective in preventing failures, but it can't produce exceptional successes by itself.

Here's the evil djinn in the bottle - Should students that are recognized as being truly exceptional by their teachers be treated differently, including being moved into advanced programs of instruction that focus on their individual, exceptional, skills? (Advanced curricula, Advanced placement courses, etc)

Should a student that excelled at the test in the OP be granted access to advanced programming courses if they wish it? Courses that others may not have immediate access to?

A culture that would seem to value producing exceptional individuals would seem to answer "Yes." BUT, then again, cultures value stability, too, else they can't function as "cultures." Dissatisfaction, feelings of alienation or ridicule, being denied opportunities... these things have to be addressed as well. So, what's the answer? Difficult.

But, we did. Saturation seems to be the dominant focus.

That's little more than throwing a bunch of coins in the air and then declaring that the ones that don't fall to the ground are the ones that the other guy can keep... Students aren't sieves. I hate that imagery. But, correctly identifying what anyone really likes or what they truly have an aptitude for is difficult.

Mork's, "The Secret of Women", Rule # 18, states: "If you are trying to determine where your date, girlfriend, or wife wants to go to dinner, don't start by asking her. Instead, suggest a place that you think is likely or desirable and, if faced with a negative response, suggest a place that neither of you have ever gone. At worst, she'll say 'No.' And, if she does, she'll likely be prompted to suggest a place. Or, you can do the really smart thing and just take her wherever you want, since all she really knows is that she's hungry and doesn't care where the food comes from."

If I gave you everything, where would you put it?

I'm in favor of broad general exposure to a wide variety of subjects. Nobody can decide if they're interested in something if they've never been exposed to it. But, if we're not interested in producing a large number of exceptional generalists, then we have to start specializing at some point ... or we have to extend the period of formal education.

(Note: There are very definite merits associated with producing "exceptional generalists" and multi-discipline degrees. However, that is only if those individuals can sustain themselves long enough to apply those skills and knowledge. And, they have to be in an environment that rewards and welcomes that in order to be successful - Forbes: Charlie Munger)

... Not everyone approaches the same problems the same ways, which is why some students are better at some things than others. We've simply accepted that this is normal and representative of potential, rather than trying to figure out why. Increased educational spending seems to be avoiding this.

We have social constraints and we have desired outcomes. Sometimes, they're not compatible. What we have to have are effective compromise positions and programs. The most effective are those that reduce the failure rates and have an acceptable frequency allowing gifted individuals to excel. At the very least, such programs should prevent "cracks" in which students that would otherwise be promising fall through into academic obscurity or worse.

Throwing money at things tends to help, but it can also artificially reinforce inefficient programs that need to be more tightly focused.

Which, I guess, is the justification for ignoring outliers?

Outliers wouldn't be ignored if we were concerned with normalizing them. We're not. We tend to view "outliers" as desirable in terms of human individuality. If we didn't, then outliers of all sorts would be simply given enough voltage until they were no longer outliers...

We are catching up to the idea that we need to provide more rigorous courses and programs, and by that I mean programs with "rigor" and formal structure to them, to students that are exceptional on both ends of the academic spectrum. Keep in mind that "modern" (contemporary) education is a recent development. Not long ago, those at the left end of the spectrum, no matter the reason, would be funneled into "trade" skills ("Shop" Class) or, worse, dumped into the shallow end of the pool to be forgotten, and the only ones that had access to higher quality education were those that could afford to pay for it.

But as we improve, should we not reattempt to address certain factors and further simplify this?

Yes. But, as soon as you say "simple" in terms of a person's behavior, you're gonna have a bad time... I am a special snowflake! (Chips told me so, above! <let's see if he read this>) So, of course, nothing about me is simple.

We need to strive for efficiency, perhaps, more than simplicity. It's usually the same thing, in the end, but establishing the avoidance of complexity as a general rule isn't, usually, the easiest path to creating efficiency. What's the rule? "You can have it fast, good or cheap. Pick two."

Dig much deeper into this guy. ...I hope he took the advice. Unfortunately, I doubt it. Ultimately, it should have been motivation for him to look at the underlying problem, but instead he probably wrote it off as "it's impossible to control oneself in every situation, so go entertain yourself, you pompous false-friend," right?

Pretty much, correct. Obviously. (History of some behavioral issues, difficulty with relationships/interpersonal, general anger-management issues, etc.)

Here, it was somewhat offtopic, but I couldn't help pointing out that one single experience can make a huge difference in a person's life. Just one. Just one instance of something happening can have lifelong consequences. That's true for adults and children, but children especially, since that one instance is always going to be the very first time they've ever encountered it. It could be particularly damaging or it could be tremendously rewarding. In this example, it's obviously the parent's responsibility to make whatever it is a rewarding experience for a child. For teachers at all levels, this is also true - Their students will be encountering subjects for the first time.

A 100-level class doesn't need to drone on about the most boring crap that discipline has to offer... It's not there to shove inane crap into students' heads that nobody in the discipline cares about anymore. By week two, the professor should stand up and announce:

"OK, class, I've covered all the boring crap that nobody really gives a crap about, anymore. Read over chapter three, entitled "Crap nobody cares about anymore" to discover more of it and write a three page paper telling me what the most boring bits were about, if you want. If you find this interesting, which is certainly possible, you can sign up for "Interesting Boring Crap About <discipline> lvl 304", advanced studies class. Now, for the rest of this introductory class, we're going to be talking about all the really fookin' cool-as-hot-crap-awesome stuffs this discipline is doing. It's gonna be fookin' cool and, on the overhead projector is the next really fookin' cool mystery this fookin' cool discipline is trying to solve! Tomorrow, we're going to shove half-a-liter of coffee into a research scientist in this field and he's gonna be hosted as a guest lecturer, to tell us about what he's currently studying and why it's so fookin' awesome-cool!"

So, how in the heck is a first-year student supposed to fookin' get interested in a discipline or be inspired to investigate it further if their first exposure to it is the most boring crap anyone in the discipline can think of? So boring, in fact, that any prof or graduate student that has nothing better to do in order to justify their existence can easily sign up to teach it.

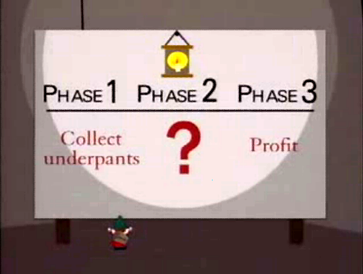

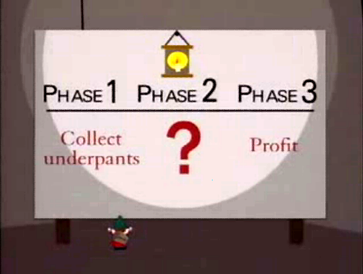

We do count on a student to develop an interest in something, right? That's why we encourage "saturation" in some things? Within a discipline, it's hoped that saturation + magic = knowledge.

Actually, we definitely understand to a much deeper level. The failure here, and the consistent failure overall, is that we try to increase the number of successful students by trying to eliminate difficulties, rather than trying to find out what makes things difficult and find a way to actually make them easier without avoiding the topics.

Are you assuming my... uh... level of difficulty with difficulty? !! Some things are actually difficult. So, how do we make them less so? The tendency, when making something less difficult for someone to understand or use, is to present it as less "like" what it is and more "like" something the person already knows. (Allegory) The problem is that the further we get away from what something really is, the less we're actually experiencing what it really is. Physicists shake their heads and moan whenever asked to explain a complex thing to a layman, not because it's difficult to explain, but because it's difficult to accurately explain to someone who doesn't already possess the knowledge necessary to interpret it.

Reduction is important. Reducing something to its constituent parts helps to avoid the complexity presented in the aggregate. But, how much reduction is useful in practice and what is it that one wishes to produce in terms of a finished, graduated, student?

Does a great chef have to know quantum physics? No. Would that be helpful for them? No. What about chemistry? Well, some form of basic chemistry would be helpful. Are the best chefs chemists? Some are, in a fashion, but grandma cooking the family recipe that tastes delicious probably isn't. It's helpful if they have some knowledge of chemistry, but it isn't completely necessary to produce "A Great Chef." Reduction, here, doesn't work well unless it's being used to make a sauce.

...Most failed immersion students are glued to their cellphones or depend on their dictionaries rather than trying to accept vocabulary from its context....

Language studies are awesome, especially when dealing with children from multilingual homes. Apparently, such children freely interchange languages in their early years, being comfortable with both.

However, by around 9 or so, IIRC, they generally settle on a preferred language. (There are notable exceptions, but they're very rare, IIRC.) For those who aren't continually exposed to this dual environment, their knowledge and use of the secondary language virtually disappears.

"I haven't studied that since my first year at University, so have forgotten it."

That's a common quip and the reason is the same as children forgetting most of what they knew of a second language, even in multilingual homes - Without continuing working, practical, exposure to some knowledge, we tend to lose proficiency. That's not a bad thing, really.

So, for assembly programmers and language developers, they "know" assembly and have a working knowledge of it. For certain software developers, they may have a conceptual knowledge of it. Programmers and general code-monkeys may have less knowledge, but could have some knowledge more focused on certain areas - Partial expertise. Some web developer or even a very specialized engineer may be so far from "assembly" that they don't even have a conceptual knowledge of it.

What do we wish to produce? What is necessary to do that? How do we do that efficiently? How do we make the knowledge and practical skills less difficult to absorb?

Each of those questions has to be answered in order to make an efficient program of instruction that is beneficial for the individual and, because the two are connected, for society as well. Some things may, indeed, include a foray into Assembly while some may only include requirements for the most basic expertise.

...I think they just see what they want to see. Murder is frowned upon universally, except some cultures justify capital punishment. Instead of seeing that we have inconsistent application and/or create exceptions to the rules, they, who may wish to say morality is entirely relative (for obviously tempting reasons), may see that as a justification to say that some cultures support murder, and thus that cannot be a universal opinion...

Murder = Unlawful killing. Aaaand, when we go further than that, the whole definition breaks down. I'm against "killing" as a general rule, no matter if the State reserves that right or not. The difference between human cultures is that each defines "lawful" according to its own conventions. But, they all define it in some way.

Yep. Always know what level of abstraction you need to be at. Do you need a ball, or do you need a ball that can bounce?

Stealing this... Stolded it.

...There's a lot to be said about that. Or, rather, that alone says a lot to the careful observer...

"Don't be deceived by the cover" someone once said. Yet, as humans, we are very attuned to the initial impressions of anything. That includes fatties, ugly people, dirty people, "other" people, blah blah blah...

We can apply that directly to this topic, though!

If a student's initial exposure to a new discipline is "bad", what's their opinion of that discipline likely to be?

I remember the days of eager students sitting in the "Student Activity Center" talking about particularly exciting things in their first year or so, sometimes later. They'd jabber on about "cool stuff" they'd just found out about a particular subject. They'd argue, with good intentions, and bring out coursebooks to reinforce their point. Seriously, this stuff happened. (Of course, we were allowed to smoke back then, so the whole place would be filled with it and some students probably didn't go there...)

The students that seemed to discuss their newfound knowledge the most, with the most enthusiasm, were always taking classes from the most well-liked teachers on the campus.

They weren't excited about taking classes from the easiest professors. They didn't care at all about professors and the classes that were notoriously boring renditions of "and then this happens."

IMO, one can't teach much to a student that lacks the internal motivation to learn it, no matter how a course is designed. If one wishes to truly chase the lightning in the bottle, then that is going to involve motivating students. And that means getting them excited about the discipline and interested in it as a meaningful, living, breathing, dynamic thing.

Do you want them to learn assembly? OK, then tell them they're going to create a spaceship capable of finding its way to Mars and that they are going to help make it happen by practically applying "Assembly." Or, a drone capable of navigating around a room, by itself.

Sometimes we don't point it out enough. The real issue is that we never seem to maintain the right level of abstraction/specificity.

Completely accurate statement. How much is "just enough" and is "just enough" ever actually "enough?" That applies directly to how much saturation, how much immersion, one thinks may be required. It also applies to how far an instructor can go and how much they need to motivate their students in terms of self-study. Or, motivation including practical self-study, which is always more complex than it initially appears - "This is Assembly. What do you want to do with it? OK, do that and I'll help you along the way." To the novice of any discipline, taking early knowledge and making practical use of it in that discipline always, always, involves stumbling across related knowledge in that discipline.

Absolutely. We need to learn how to appropriately apply the generalizations. Unfortunately, there are no general rules. What would be helpful is to further our understanding of why things don't fit the generalizations, since trying to make things fit the generalizations generally ends up horrible.

We are purpose-built, from the ground up, to generalize all experiences and knowledge. Every spider is poisonous, every snake a danger, every shark is... It's a fookin' shark, wtf?

It's a darned useful attribute. It can be used to learn very complex things, as well. But, only to a point.

Is the allegory or imagery of building some functional contraption out of Legos applicable to Assembly, for instance? (I have no idea, it's a serious question.) What about teaching students how an abacus works?

The point is that there could be ways that could identify students that can easily grasp the fundamental structure of something, even if what they show promise in isn't directly related.

I know a bunch of darn AD&D, 3rd Edition, players that would make the most cutthroat attorneys you ever regretted seeing in a courtroom.

But, should they have all gone to law school? The majority of them went into computer-science related disciplines, by the way. Does that say something about their basic interests or more about badgering me with sea-lawyering up nonsense to get around whatever problem I had just presented them with? Or, perhaps, outlets for creative expression? Remember, you said programming was an art form, right?

Right.

The diagnosis itself means nothing. The consequential results of that diagnosis are a different story. I was, at one point, medicated for it. Let's just say that, on one hand, it removed the symptoms that people in general cared about, but on the other it introduced more problems overall. The assumptions tend to make things worse, as well, especially when there are false assumptions on the method of action. That said, I can't blame that for everything that went wrong in my life, but I sure can blame it for a whole lot.

You'd be right, on all counts. "Medicine" is really poison, just a beneficial sort of poison. Good medicine alleviates symptoms without causing reactions that are worse. A sort of positively-balanced poison...

I have very strong issues with how people who have any sort of neurological problem are treated by society-at-large. That includes those, like yourself, that may have diagnoses that really have nothing at all to do with people other than yourself. You've been diagnosed with ADHD. So, that might mean you need some tailored instruction or maybe even, at worst, some medication. It doesn't mean you're going to murder someone with an axe or are unsafe around their pets. I've got friends similarly diagnosed and I never knew that until they told me. Then again, I had a live-in girlfriend who was diagnosed with that and she tried to kill me with a piece of glass... wtf? Somehow, I don't think that was related.

The point is that we have a really big problem in our societies with dealing with this issue and it's one that should be fairly easy to solve. BUT, that whole "don't judge a book by its cover" thing gets in the way, with people instantly making generalizations that anyone who has some sort of issue like that is supposed to be treated as if they'd just stepped out of "Silence of the Lambs" and invited them to "dinner."

I'd also like to point out that the APA and many other such entities in other countries have a penchant for redefining things as knowledge, or political and social trends, dictate. What is "ADHD" today may not be the "ADHD" of tomorrow. It's extremely frustrating for all concerned, but that's "progress."

As a non-professional, unlicensed, non-practicing, degree holder with old knowledge and no structured continuing education in the matter and who is not attempting to provide any form of diagnosis, I don't think you fit the classic ADHD model that I was instructed in under the DSM-III, back in the day. Then again, that model for diagnoses has changed, expanded, contracted and gone sideways. We're still learning. (IMO, we should gravitate towards more functional diagnoses dependent on neurological exam, fMRI, chemical testing, etc, as such knowledge improves so as to yield more useful diagnoses that aren't strictly behaviorally based.)

...Absolutely. Unfortunately, more animalistic cultures seem to be in danger of taking over, as this is in culture rather than human nature. There is a separation between "pedophilia" and "pedophilia." Milo Yiannopoulos pointed this out and, in effect, labeled himself as a pedophile (ironically, those same people going after Milo won't go after certain other individuals [perhaps because they're on the same side?]). Physically, we're attracted to those who can reproduce. Mentally, we're discouraged from attraction with those who may be capable, but still aren't ready, to reproduce. Not all cultures accept the mental discouragement, however. This is not just a bad thing for those within their own culture, but it can also be bad to all individuals of a rival culture...

This is directly applicable to the concept of social mores, specifically reproductive and "marriage" conventions as well as defining "incest." I realize this is a controversial subject for laymen, but these are real things in the subject of universal social mores and reproductive rights. Most exposure to the subject comes in Anthropology, the study of human cultures, but can also be found in Psychology and in Sociology.

The point is that we construct definitions for things in human behavior that may also include intra-human behavior. "How a person interacts with others" or "how a person acts in society" are important to behavioral definitions, i.e., Psychology, the study of human behavior. It also relates directly to concepts of "Sexuality" and how that is defined.

In short, which anyone reading this will be thankful for, there are obvious cultural influences regarding what are supposedly "human behaviors." If these behaviors are considered to be "abnormal" then they are considered as being a diagnosable "problem." Some issues are only ever considered to be truly diagnosable if, and only if, that issue prevents someone from "functioning normally in society."

Seriously. "Does this behavior prevent this individual from functioning normally in society" is a guideline, or was back in the days of the DSM-III in studying Psychology in the U.S.

I can give a host of historical examples where otherwise formal, serious, "scientific" studies have impacted cultural and social bias and political policy. I'm not going to do that unless prompted, though.

The point is that social norms, cultural "rules", often dictate what is considered to be "rational" behavior. This is not always consistent and, obviously, when social convention changes, these supposedly "scientific" diagnoses get changed, too. They're actually considered "soft-science" for a good reason, but that term tends to make people think they're all somewhat invalid and that's not the case.

Cultural values and social norms impact definitions of "deviant" behavior. What amounts to a "headman" in Anthropological parlance, in some Middle Eastern cultures would be considered to be exhibiting perfectly acceptable behavior if they kept as a dancer and bedwarmer a young effeminate boy. But, if that was a girl? They'd be lynched by the tribe... WTF? In the US, in either case, they'd likely be diagnosed as being a pedophile, even if their sexual proclivity wasn't exclusive, was socially forced (There is competition to see who can attain the most attractive, "desirable", ones amongst various clan chiefs/leaders as a mark of social status), and was socially acceptable behavior in their own region.

There are elements of human behavior that are most certainly defined as being deviant or problematic based upon cultural norms. Some of these are changing as our culture changes, too.

(Note: I had never heard of Milo Yannawhatsits, so looked him up. There's something interesting to point out, here. He attended a "school for boys." There has been some discussion of a positive correlation with pedophilia, specifically same-sex, with early childhood experiences in same-sex institutions like "boys' schools." This was brought about during the uproar surrounding pedophilic priests and the notion that many may have attended monastic Catholic schools where there were no girls present during early puberty, etc. Some priests diagnosed, self or not, have also claimed this as an origin for their fixation/problem. Because "girls' schools" are very limited, usually to "troubled teens" defined by wildly inaccurate means, I don't know if these as-of-yet-unproven assumptions can hold true. However, the frequency-rate between sexes is generally weighted towards men. The reason is not yet known, AFAIK. It has been a while since I studied human sexuality in-depth, other than just whatever makes the newspaper. These days, one doesn't just start Googling about pedophilia. <Chris Hansen Meme Here>)

Given the source of the study as well as the purpose, I find it very unlikely that they did account for the medication. Especially because they boasted sample size, which means they most likely included medicated individuals.

I'd have to see the actual paper/study to be able to make any determination. I would hope that if they did, it would have been invalidated by now if that was a significant enough factor.

But, "brain size" is not really a significant determiner of human intelligence, by itself. There are specific cases where it is, but these are generally due to malformations, injury, otherwise already known severe circumstances.

I think we do know things, but we miss the mark because we get overanxious in what we know. I see so many theories and beliefs that are popularized and supported, that are based on things that are based on things that are based on things, and so forth, until you get to something with really shaky ground. .... Don't even get me started on quantum physics. Show me lying on my deathbed, and then I'll believe in your time travel.

There are hard sciences and soft sciences and whenever they meet, there's troubled sciences. Psychology is a "soft science" despite its use of lots of maths and experimental rigor. Psychiatry is a "harder science." They are not the same, despite having the same root, but they are directly related and study much the same thing.

Keep in mind the mystique of "human behavior" and the barriers that we are presented whenever we must attempt to pursue true knowledge regarding it - We can't peek into someone's head. Until we can peek, we are going to come up with all sorts of ideas regarding what "normal" is for human thinking "behavior." Functional Magnetic Resonance Imaging is a very, very, new tool that is helping to pave the way for more accurate diagnoses of neurological conditions. However, how closely those impact behavior issues can't easily be established. We can't, for instance, point at evidenced behaviors as being directly attributable to some pattern we see in an fMRI. We most certainly can't point out "deviant thoughts" in an fMRI, either.

<I do see your train of thought, there, just edited quote for page-space.>

Is time-travel possible? Absolutely, as we're doing it now, right? Is it possible to alter the rate? Sure, it's easily possible to alter the "relative rate." Is it possible to alter the direction? Yes, it is, depending upon what set of physical rules are actually "true" and what actual limits in terms of energy density exist. But, where and when will you actually end up? That's still open for debate, depending upon whose idea of a Universe one is dealing with.

But what is "general intellectual capability?"

And now, the Rabbit Hole.

We define what normal intellectual ability is. Our culture defines that. Exhibited behaviors define that.

The most brilliant chimp at the zoo has figured out how to open its cage and visit a friend in another building.

There is no absolute standard that we can find for "intelligence." There are only relative standards. We can't sample the entire human population in order to establish a true "mean." We can, however, develop a test that we have evidence of practical validity for and then gauge performance in regards to that test. That test yields basic, serviceable, results that have predictive value up to a point. It has useful value and we can benefit from knowing the results. We can, for instance, identify students that may have problems in school and help them through specialized education. We can also identify those with exceptional performance and, perhaps, be more comfortable with accelerating their exposure to more complex subject matter.

In the end, though, it's only a rough predictive indicator of intellectual performance, if not outright ability. It's about predicting behavior that can be evidenced by performance, that's all it is. Whatever else goes on, the quality of someone's thoughts, their internal states, unique and valuable insights, all of that - Those can't be measured unless they are in evidence, right in front of us in plain view in some observable way.

...Is it genes that make people fat, or is it that they inherited their eating habits from their parents' habits rather than genes? This can be solved with "twin studies," but general cultural things are a bit harder to get away from, since the general culture is more accessible.

Food makes people fat. I know this because if we take away food, people become less fat. Well, up to a point. There comes a point where frightfully obese people can die from "starvation" even with huge "fat reserves." Deny them enough of an important nutrient and systems will break down and cause death... it's that simple, no matter how fat they are.

We can't forget that it is we who come up with definitions for things. We don't come across their entries in some Universal Lexicon and then transcribe them into a usable format. Because of this, definitions for all sorts of things change. Also because of this, some things claim more validity than others when based upon a language other than "words." (Mathematics, I'm looking at you.) "Precision" in the communication of ideas is very difficult without the right language.

We have yet to invent the practice of "Psychohistory" and psychology has yet to find its Hari Seldon.

Regression is a concern, however.

Regression is only relative and we can't strictly apply some sort of qualitative state to it. What are our desired, if taken collectively, results? What do we want? If we decide what we want is "regression" then achieving that is "progress" isn't it?

Damn, I love words. They're so interesting.

... The test highlights that these concepts are not being learned as a result of instruction. To me, the situation is obvious: the instruction is failing to teach necessary concepts. The test adequately evaluates how well one understands the concepts, and yet the lack of change in values shows that instruction is mostly worthless. Yet, we refuse to see it that way.

If enough graduating students wear blue shirts, we may assume that the only students worthy of graduating are those who wear blue shirts.

There is a unique fallibility in a screening test. The more specific the test in its complexity and coverage of the screened-for subject or ability, the less valid it is as a screen and the more like a diagnostic tool it becomes. Its purpose is to detect general indicators that may have positive correlations with a screened-for attribute. If you want to know how excellent any particular student will be at learning "computer", you should give them a more appropriate test, like..."learning computer."

How well does such a test correlate with all instances of "learning computer?" Does failure preclude a student from being considered able to learn to program? Remarkable, if true! AFAIK, there are very few anythings in any discipline that can lay claim to predicting "truth." A "fact" may be that the student failed the test, but only the results of a course of study can prove the truth of its prognosis in that the student will be incapable of exhibiting the behavior of having learned.

Voltage and time... that's all that's needed to "teach." Or, maybe, a tailored program of study for someone who otherwise possesses the basic tools needed. They may not be outstanding programmers, but they'll at least be able to write code.

...It's easier to say "this person has no potential, thus I don't have to waste time on them" rather than "I suck at teaching."...

"I only want the easiest students to teach. Heck, just give me fookin' students that know all this crap, already... "

...It's not just an embarrassingly simple concept, but it's necessary. Something, here, just isn't working out for us.

What is "assignment?" What's the contextual explanation? OK, how does that function translate to something the person has experience understanding?

There are several infamous examples of this issue being brought up as cultural bias in IQ tests. "If Johnny drives a car to Jane's house..." WTF is a "car?" How in the heck does this woman own a house? What is "drive" and what are the functions that includes? Etc... So, then everyone goes overboard and says "Let's just use symbols and boxes and squares, yay" and entire cultures are dumped by the wayside because they see these symbols as representing something entirely different.

I am dubious as to the predictive value of a "Computer Programming I.Q. Test." I could see logic skills being significant. I could see that someone needs to understand how relationships can be assigned and formed. I can see how one needs to understand how to apply a set of rigorous rules towards achieving a desired goal. But, an entire "IQ Test" for computer programming aptitude? It's in the realm of "maybe" in terms of some predictive value, but it's far outside of "absolute."

...They are sick of applicants like this. Personally, I'm sick of applicants like this getting interviews when I don't even get an interview for a lesser position...

Jobs are a social thing, rarely awarded simply on merit and certainly not retained only due to individual performance. In today's markets, everything is remote, with little opportunity for personal interaction. But, it's not impossible to use social skills to retain employment if one has the necessary minimum qualifications already established.

Not familiar with Lorem Ipsum?

I've seen it before, just not fully quoted in a forum post.

It's babble and pointless to them, simply because they cannot follow.

I don't think so. I think that it's more likely that you and I aren't deterred by engaging in a discussion in such a way. There isn't any member here that I know of that couldn't understand any of this. Whether or not they're willing to read through it in order to make direct replies is another matter.

For myself, and perhaps I should have been diagnosed with ADHD, I can follow along exactly as you have intended, easily able to see how your statements relate to each other and where the inspiration has come from for what others may see as deviations from the topic. To me, it's plain.

Then again, my reputation is for exactly the same sorts of posts and rambling discourse, which many don't seem to favor. It's fine, I don't mind. The thing is, many topics that lay claim to being "focused" involve very complex things that are very "not focused" at all, but are widely influenced by things that don't often get considered, IMO.

(Mammoth post, sure to irritate many. /bracing for complaints)